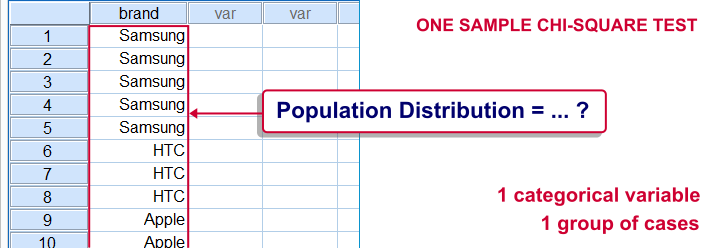

The SPSS one-sample chi-square test is used to test whether a single categorical variable follows a hypothesized population distribution.

SPSS One-Sample Chi-Square Test Example

A marketeer believes that 4 smartphone brands are equally attractive. He asks 43 people which brand they prefer, resulting in brands.sav. If the brands are really equally attractive, each brand should be chosen by roughly the same number of respondents. In other words, the expected frequencies under the null hypothesis are (43 cases / 4 brands =) 10.75 cases for each brand. The more the observed frequencies differ from these expected frequencies, the less likely it is that the brands really are equally attractive.
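The expected frequencies above follow from simple division. As a cross-check outside SPSS, a minimal Python sketch could look as follows; the helper name `expected_equal` is made up for this illustration and is not part of SPSS.

```python
def expected_equal(n_cases: int, n_categories: int) -> list[float]:
    """Expected frequencies when all categories are equally likely under H0."""
    if n_categories <= 0:
        raise ValueError("need at least one category")
    return [n_cases / n_categories] * n_categories


# 43 respondents spread evenly over 4 brands, as in the example above.
expected = expected_equal(43, 4)
print(expected)  # [10.75, 10.75, 10.75, 10.75]
```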

1. Quick Data Check

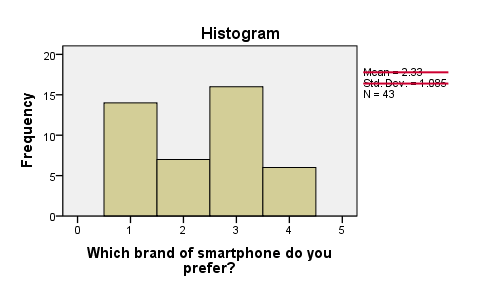

Before running any statistical tests, we always want to have a basic idea of what our data look like. In this case we'll inspect a histogram of the preferred brand by running FREQUENCIES. We'll open the data file and create our histogram by running the syntax below. Since it's very simple, we won't bother about clicking through the menu here.

*1. Set default directory.

cd 'd:/downloaded'. /*Or wherever data file is located.

*2. Open data file.

get file 'brands.sav'.

*3. Inspect data.

frequencies brand/histogram.

First, N = 43 means that the histogram is based on 43 cases. Since this is our sample size, we conclude that no missing values are present. SPSS also calculates a mean and standard deviation but these are not meaningful for nominal variables so we'll just ignore them. Second, the preferred brands have rather unequal frequencies, casting some doubt upon the null hypothesis of those being equal in the population.

2. Assumptions One-Sample Chi-Square Test

- independent and identically distributed variables (or “independent observations”);

- none of the expected frequencies are < 5;

The first assumption is beyond the scope of this tutorial. We'll presume it's been met by our data. Whether the second assumption holds is reported by SPSS whenever we run a one-sample chi-square test. However, we already saw that all expected frequencies are 10.75 for our data, so none of them is smaller than 5.
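The second assumption can also be checked by hand before running the test. The Python sketch below is only an illustration under our own naming (`min_expected_ok` is a hypothetical helper, not an SPSS function); the 12-case sample in the last line is invented purely to show a failing check.

```python
def min_expected_ok(expected, threshold=5.0):
    """Return True if no expected frequency falls below the threshold."""
    return all(e >= threshold for e in expected)


# Tutorial example: 43 cases over 4 brands gives 10.75 expected cases each.
print(min_expected_ok([43 / 4] * 4))  # True

# Invented counter-example: only 12 cases over 4 brands gives 3 each.
print(min_expected_ok([12 / 4] * 4))  # False
```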

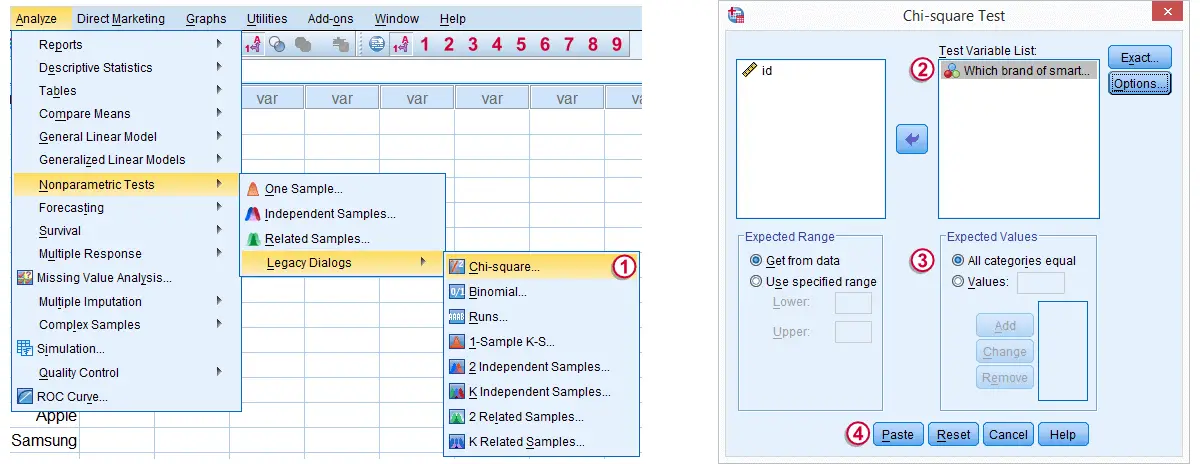

3. Run SPSS One Sample Chi-Square Test

Expected Values refers to the expected frequencies, the aforementioned 10.75 cases for each brand. We could enter these values but selecting All categories equal is a faster option and yields identical results.

Clicking Paste results in the syntax below.

*1. Set default directory.

cd 'd:/downloaded'. /*Or wherever data file is located.

*2. Open data file.

get file 'brands.sav'.

*3. Chi square test (pasted from Analyze - Nonparametric Tests - Legacy Dialogs - Chi-square).

NPAR TESTS
/CHISQUARE=brand
/EXPECTED=EQUAL
/MISSING ANALYSIS.

4. SPSS One-Sample Chi-Square Test Output

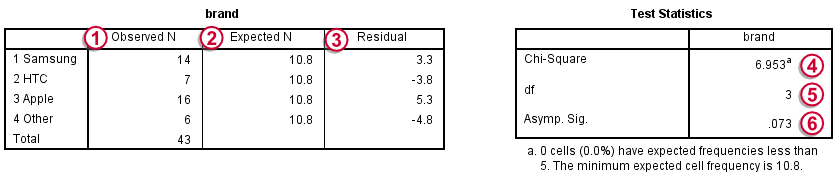

- under Observed N we find the observed frequencies that we saw previously;

- under Expected N we find the theoretically expected frequencies. They're shown as 10.8 instead of 10.75 due to rounding; all decimals can be seen by double-clicking the value;

- for each frequency, the Residual is the difference between the observed and the expected frequency and thus expresses a deviation from the null hypothesis;

- the Chi-Square test statistic summarizes the residuals in a single number: it is the sum of the squared residuals, each divided by its expected frequency. It hence indicates the overall difference between the data and the hypothesis. The larger the chi-square value, the less the data “fit” the null hypothesis;

- degrees of freedom (df) specifies which chi-square distribution applies. For this test, df is the number of categories minus 1, so (4 - 1 =) 3 here;

- Asymp. Sig. refers to the p value and is .073 in this case. If the brands are exactly equally attractive in the population, there's a 7.3% chance of finding our observed frequencies or a larger deviation from the null hypothesis. We usually reject the null hypothesis if p < .05. Since this is not the case, we do not reject the null hypothesis: our data do not demonstrate that the brands are unequally attractive in the population.
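To make the output columns concrete, the sketch below recomputes residuals, the chi-square statistic, df and the p value in plain Python. Note the assumptions: the observed counts are HYPOTHETICAL. They were chosen only because they sum to 43 and give a statistic close to the reported one; the real counts are in the output table and are not reproduced here. The function names are ours as well, and `chi2_sf` handles integer df only via a standard recurrence for the upper incomplete gamma function.

```python
import math


def chi_square_statistic(observed, expected):
    """Sum of squared residuals, each divided by its expected frequency."""
    return sum((o - e) ** 2 / e for o, e in zip(observed, expected))


def chi2_sf(x, df):
    """Upper-tail probability P(X >= x) for a chi-square variable, integer df."""
    if df < 1 or x < 0:
        raise ValueError("df must be >= 1 and x must be >= 0")
    y = x / 2.0
    if df % 2 == 0:
        a, q = 1.0, math.exp(-y)              # Q(1, y)
    else:
        a, q = 0.5, math.erfc(math.sqrt(y))   # Q(1/2, y)
    # Recurrence: Q(a + 1, y) = Q(a, y) + y**a * exp(-y) / Gamma(a + 1)
    while a < df / 2.0:
        q += y ** a * math.exp(-y) / math.gamma(a + 1.0)
        a += 1.0
    return q


# HYPOTHETICAL observed counts (not read from brands.sav); they sum to 43.
observed = [18, 10, 8, 7]
expected = [sum(observed) / len(observed)] * len(observed)  # 10.75 each
residuals = [o - e for o, e in zip(observed, expected)]
chi2 = chi_square_statistic(observed, expected)
df = len(observed) - 1
p = chi2_sf(chi2, df)

print(residuals)                         # [7.25, -0.75, -2.75, -3.75]
print(round(chi2, 2), df, round(p, 3))   # 6.95 3 0.073
```

With these illustrative counts, p stays above .05, so the null hypothesis of equal attractiveness is not rejected, in line with the SPSS output discussed above.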

Reporting a One-Sample Chi-Square Test

When reporting a one-sample chi-square test, we always report the observed frequencies. The expected frequencies usually follow readily from the null hypothesis so reporting them is optional. Regarding the significance test, we usually write something like “we could not demonstrate that the four brands are not equally attractive; χ²(3) = 6.95, p = .073.”

SPSS TUTORIALS

THIS TUTORIAL HAS 13 COMMENTS:

By Ruben Geert van den Berg on June 3rd, 2016

Thanks for your comment! I think that's a good suggestion and I'll take it into account when I'll update the tutorial - which may take another few weeks or so.

By Heather Scheidt on October 10th, 2016

I can't figure out how to change the significance level for this kind of test

By Ruben Geert van den Berg on October 10th, 2016

Hi Heather! The "significance level" is basically just the desired p-value to which you compare the actual p-value in your output. There's no changing or setting it.

Let's say you find p = 0.0136 in your output. If you want to test for 0.01 significance, you conclude that your p-value is larger than 0.01 so the association is not statistically significant. However, if you choose 0.05 as your significance level, the observed 0.0136 is smaller than that so in this case the association is statistically significant.

Personally, I strongly prefer simply reporting the p-value and a basic conclusion. I think it's nonsensical to suggest that a p-value of 0.0499 is 100% "significant" while a p-value of 0.0501 is 100% insignificant. But I'm aware that you'll sometimes have to conform to such nonsense, unfortunately.

Hope that helps!

By Sichilima John on November 24th, 2016

The tutorial is good and well understandable

By Ray on April 18th, 2017

Great tutorial! Thank you! I do have a question... if in the example you provided p < 0.05 (significant) what steps could be taken, if any, to determine which of the 4 smartphone brands was unequally attractive? In other words, which of the smartphone brands had an observed outcome that was significantly different from the expected outcome? Or, is this not possible to determine and is this technique only able to indicate that unequal attractiveness exists? Thanks!